From AI-Generated Tests to AI-Governed Test Design: Rethinking Quality Engineering

- Govindarajan Muthukrishnan

- 15 min read

- January 22, 2026

Introduction

Many teams today use AI tools to generate test cases automatically from user stories or prompts. While this accelerates test creation, it also introduces new risks: unstructured outputs, inconsistent coverage, and limited traceability. More importantly, trusting AI without defined boundaries creates governance and quality risks.

When test logic cannot be clearly validated, when pass/fail conditions are ambiguous, or when edge cases outweigh standard scenarios, AI-generated outputs become difficult to govern and unsafe to put into production. Human judgment remains essential, and AI must operate within structured constraints, not as an unconstrained generator.

This post explains why structured, methodology-driven AI for test authoring matters more than raw automated test case generation, and how context-aware, governed AI improves test quality, reliability, and enterprise readiness in high-change environments.

AI-Generated Testing

The Rise of AI-Generated Test Cases

AI adoption in quality engineering has accelerated rapidly. Teams increasingly rely on LLMs, IDE copilots, and prompt-based tools to generate test cases in seconds. At first glance, this feels like progress: manual effort is reduced, test volumes increase, and velocity improves.

However, speed alone does not guarantee quality. Generating test cases quickly is not the same as generating the right test cases. Without structure and context, AI-generated tests often mirror the same problems seen in ad-hoc manual testing: missed edge cases, inconsistent depth, and unclear rationale.

Today, the challenge is no longer speed, but reliable, explainable test design at scale.

Why Ungoverned AI Fails

Why Unstructured AI Test Case Generation Fails

Most generic AI-based test generation follows a prompt → output model, sometimes augmented with URLs or isolated knowledge sources. While fast, this approach introduces critical limitations.

1. No Persistent Product Context

Generic AI tools operate statelessly. They generate tests based only on the current prompt, without retaining system architecture, workflows, integrations, or historical decisions. This results in shallow, feature-isolated coverage.

Example: In an AI-based market-facing product, AI-generated test suites focused only on the current user story. Because persistent product context was missing, the tests validated features in isolation and failed to capture downstream impacts across workflows, integrations, and dependent modules. These issues surfaced later during integration and UAT, increasing rework.

2. Inconsistent Test Design

Without a governing framework, two testers prompting the same user story often receive vastly different outputs. There is no guarantee of completeness, prioritization, or consistency across teams.

Example: In a Prop-Tech engagement, one tester received only happy-path scenarios from an AI-generated suite, while another received a large set of edge cases. Neither received a complete, risk-balanced test set for the same story. Coverage became unpredictable, making release decisions harder.

3. Lack of Risk-Based Thinking

Most AI-generated tests focus on functional scenarios and happy paths. Business risk, failure modes, and operational edge cases are rarely prioritized unless explicitly engineered into prompts, which does not scale for large platforms such as ERPs or financial systems.

Example: In a retail POS implementation, AI-generated tests missed high-risk scenarios such as partial payments, retries, and integration failures because these risks were not explicitly stated in the prompts. Prompting at the module or function level did not scale for a large ERP-like product with complex workflows.

4. Black-Box Outputs

Many AI-based test generation tools produce test cases without revealing why a test exists, which risk it addresses, or what coverage is missing. This lack of transparency undermines reviews, audits, and governance, especially in enterprise programs.

Example: In a Prop-Tech program using Jira-based AI test generation, test cases lacked visibility into risk mapping and test design rationale. Without clear links between business risks, test goals, and coverage strategy, test reviews became subjective and inconsistent.

Structured AI Approach

What Structured AI Means in Test Authoring

Structured AI shifts the focus from generating tests faster to designing tests reliably.

1. Methodology-Driven Authoring with Governance

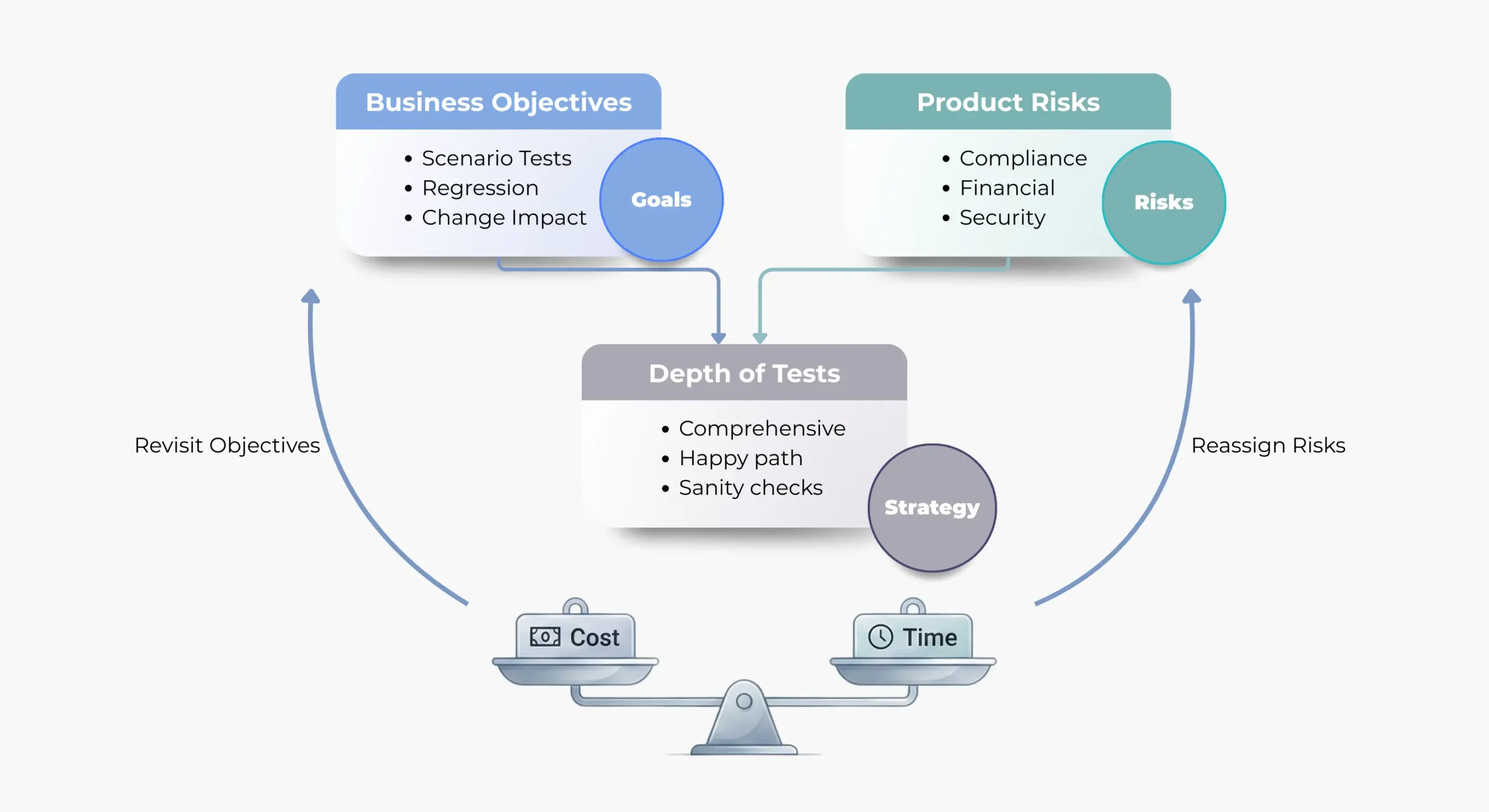

Structured AI follows proven testing methodologies, such as risk-based testing and TMap®, to define:

- Test goals aligned to business risk

- Coverage rationale and prioritization

- Explicit test strategies and techniques

This ensures predictability, repeatability, and auditability in test design.

Example: In a Prop-Tech project using a custom-built AI solution aligned to risk-based methods, test depth was directly mapped to business impact. Teams achieved predictable coverage and a balanced trade-off between quality, time, and cost.

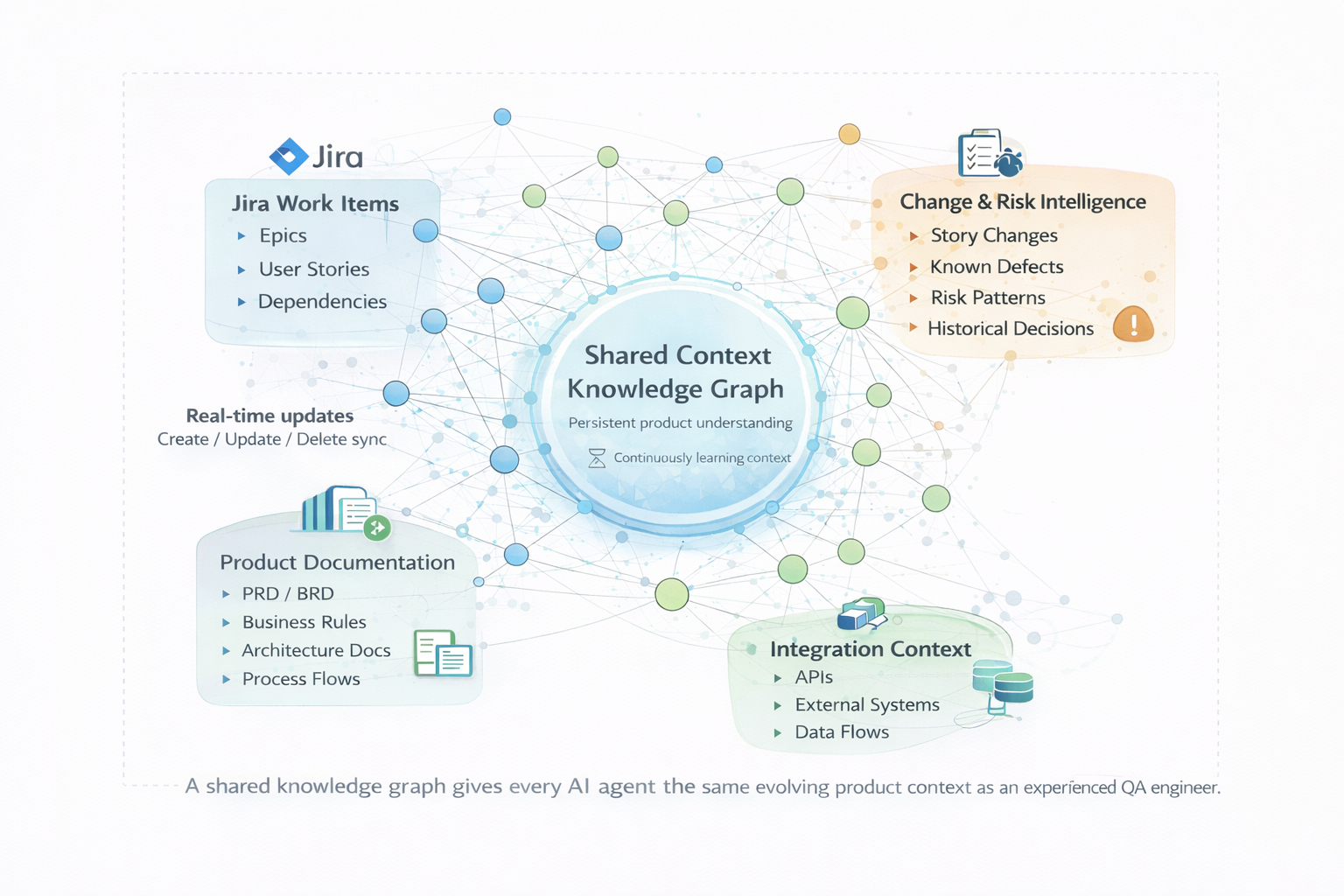
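To make the idea of mapping test depth to business impact concrete, here is a minimal Python sketch of risk-based prioritization. It is not the solution used in the engagement above. The `Risk` class, the 1 to 5 scales, the score thresholds, and the tier names are all assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Risk:
    name: str
    likelihood: int  # hypothetical scale: 1 (rare) to 5 (frequent)
    impact: int      # hypothetical scale: 1 (minor) to 5 (severe)

    @property
    def score(self) -> int:
        # Classic risk-based testing heuristic: likelihood times impact.
        return self.likelihood * self.impact


def depth_for(risk: Risk) -> str:
    """Map a risk score to a test depth tier (thresholds are illustrative)."""
    if risk.score >= 15:
        return "thorough"
    if risk.score >= 6:
        return "standard"
    return "light"


# Hypothetical risks for a point-of-sale product.
risks = [
    Risk("receipt footer text is truncated", likelihood=2, impact=1),
    Risk("partial payment fails mid-transaction", likelihood=3, impact=5),
]

# Highest-risk items first, each with the depth of testing it warrants.
plan = {
    r.name: depth_for(r)
    for r in sorted(risks, key=lambda r: r.score, reverse=True)
}
print(plan)
```

Because the thresholds live in one function, two testers working on the same story get the same depth for the same risk, which is the repeatability this section describes.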

2. Context-Aware Test Generation

Instead of relying on a single prompt, structured AI uses a persistent knowledge layer comprising user stories, workflows, architecture diagrams, integrations, and prior changes.

This allows AI to generate tests with the same system-level understanding that experienced QA engineers rely on, improving coverage consistency and reducing downstream rework.

Example: In a large enterprise workflow and compliance platform, senior QA engineers relied heavily on institutional knowledge to design meaningful tests. Earlier AI-generated tests lacked this context. Once test authoring agents were made context-aware, AI began identifying downstream impacts and edge cases upfront, reducing rework and improving coverage consistency.
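One way to picture a persistent knowledge layer is as a small store that knows which components depend on which, and attaches the relevant notes to every generation request. The sketch below is an assumption-laden illustration, not a description of any specific product: `KnowledgeLayer`, its fields, and the component names are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class KnowledgeLayer:
    # component -> components that consume its output (downstream dependents)
    downstream: dict[str, set[str]] = field(default_factory=dict)
    # component -> notes about workflows, integrations, and prior changes
    notes: dict[str, list[str]] = field(default_factory=dict)

    def impacted(self, component: str) -> list[str]:
        """Return the component plus everything reachable downstream of it."""
        seen: list[str] = []
        stack = [component]
        while stack:
            current = stack.pop()
            if current in seen:
                continue
            seen.append(current)
            # Sorted so the traversal order is deterministic.
            stack.extend(sorted(self.downstream.get(current, ()), reverse=True))
        return seen

    def build_context(self, story: str, component: str) -> str:
        """Assemble the text that would accompany a test-generation request."""
        lines = [f"User story: {story}"]
        for name in self.impacted(component):
            for note in self.notes.get(name, []):
                lines.append(f"[{name}] {note}")
        return "\n".join(lines)


# Hypothetical product structure.
layer = KnowledgeLayer(
    downstream={"checkout": {"payments", "inventory"}, "payments": {"ledger"}},
    notes={
        "payments": ["Retries are idempotent per order ID."],
        "ledger": ["Posts entries only after payment settlement."],
    },
)
context = layer.build_context("Allow split payments at checkout", "checkout")
print(context)
```

The point of the sketch is that a change to `checkout` automatically pulls in notes about `payments` and `ledger`, so downstream impacts are in front of the generator before any test is written.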

3. Transparent Test Strategy

Structured AI makes the test strategy visible by exposing:

- Risk-to-test-goal mappings

- Coverage maps and completeness scoring

- Test techniques applied and rationale

Teams can see what is being tested, why it matters, and what remains uncovered before execution begins.

Example: In a retail POS system, teams could trace every test case back to its risk, test goal, and chosen technique. This transparency improved review quality and strengthened release confidence.

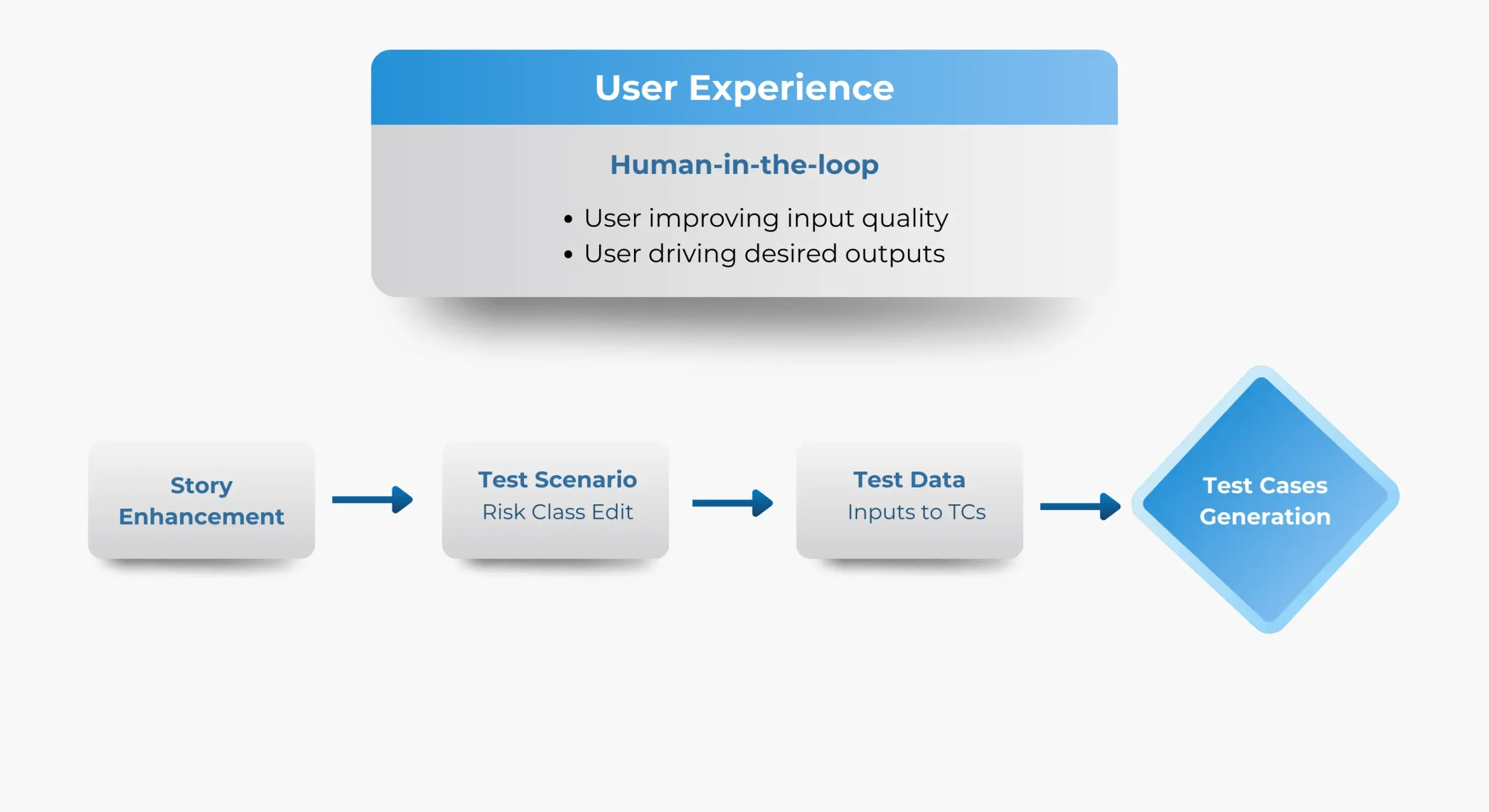
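The traceability described above can be sketched as a simple record per test case plus a coverage report. This is an illustrative sketch only. The `TracedCase` fields and the definition of the completeness score (the share of identified risks that have at least one test) are assumptions, since the article does not define how the score is calculated.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class TracedCase:
    title: str
    risk_id: str    # which risk this test addresses
    goal: str       # the test goal derived from that risk
    technique: str  # the test design technique applied


def coverage_report(risk_ids: list[str], cases: list[TracedCase]) -> dict:
    """Report which risks have no test and an assumed completeness score."""
    covered = {case.risk_id for case in cases}
    uncovered = [rid for rid in risk_ids if rid not in covered]
    # Assumed definition: fraction of risks with at least one mapped test.
    score = (len(risk_ids) - len(uncovered)) / len(risk_ids) if risk_ids else 1.0
    return {"score": score, "uncovered": uncovered}


# Hypothetical risks and test cases for a point-of-sale system.
risk_ids = ["R1", "R2", "R3", "R4"]
cases = [
    TracedCase("Pay half by card, half by cash", "R1",
               "Partial payments settle correctly", "decision table"),
    TracedCase("Card declined on second tender", "R1",
               "Partial payments settle correctly", "error guessing"),
    TracedCase("Retry after gateway timeout", "R2",
               "Retries do not double-charge", "state transition"),
]
report = coverage_report(risk_ids, cases)
print(report)
```

A reviewer reading this report sees that `R3` and `R4` have no tests before execution begins, which turns a subjective review into a checkable one.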

4. Human-in-the-Loop Control

AI accelerates test authoring, but humans retain ownership. QA engineers review, refine, and approve outputs, ensuring accountability while multiplying productivity.

AI acts as an assistant, not an autonomous decision-maker.

Example: In a large enterprise workflow and compliance platform engagement, testers adjusted risk severity for critical workflows before finalizing test suites. This human oversight improved confidence without slowing delivery.
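Human ownership can be enforced in code as an approval gate: an AI-drafted suite cannot be published until a reviewer signs off, and every adjustment is logged. The sketch below is a minimal illustration under assumed names (`SuiteDraft`, `Status`, `publish`, and the reviewer and workflow labels are all hypothetical).

```python
from __future__ import annotations

from dataclasses import dataclass, field
from enum import Enum


class Status(Enum):
    DRAFT = "draft"
    APPROVED = "approved"


@dataclass
class SuiteDraft:
    name: str
    severity: dict[str, str]  # workflow -> severity proposed by the AI
    status: Status = Status.DRAFT
    audit_log: list[str] = field(default_factory=list)

    def adjust_severity(self, reviewer: str, workflow: str, new: str) -> None:
        """Let a human override the proposed severity and record the change."""
        old = self.severity[workflow]
        self.severity[workflow] = new
        self.audit_log.append(f"{reviewer}: {workflow} {old} -> {new}")

    def approve(self, reviewer: str) -> None:
        self.status = Status.APPROVED
        self.audit_log.append(f"{reviewer}: approved")


def publish(draft: SuiteDraft) -> str:
    """Refuse to publish anything a human has not approved."""
    if draft.status is not Status.APPROVED:
        raise PermissionError("suite must be approved by a reviewer first")
    return f"published {draft.name}"


# Hypothetical review of an AI-drafted suite.
draft = SuiteDraft("compliance-suite", {"approval workflow": "medium"})
draft.adjust_severity("qa.lead", "approval workflow", "critical")
draft.approve("qa.lead")
result = publish(draft)
print(result, draft.audit_log)
```

The audit log keeps accountability with the named reviewer, while the gate itself adds only one explicit step to delivery.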

Impact on Manual Testing

Why Structured AI Improves Manual Testing Outcomes

When structured AI is applied to manual test authoring, teams consistently observe:

- Faster test authoring without coverage loss

- Consistent test quality across teams

- Better handling of continuous requirement change

- Reduced rework when stories evolve

- Clear visibility into risk and coverage gaps

This strengthens both manual testing today and automation readiness tomorrow, since automation depends on well-designed tests.

Conclusion: Design First, Generate Second

AI can generate test cases instantly, but without structure, it merely accelerates poor design. Structured AI brings testing back to fundamentals: context, methodology, transparency, and governance.

The real advantage of AI in QA lies not in automation alone, but in engineering better test design at scale.

Still Relying on Ungoverned AI for Test Design?

Explore how Indexnine enables structured, AI-driven test authoring for enterprise QA teams with transparency, governance, and predictable outcomes.